Today, I answered my daughter Katy’s ice bucket challenge and am donating to ALS! I also challenged three more people. You now have 24 hours to answer the challenge!

Sunday, August 24, 2014

Saturday, August 23, 2014

Solar Energy That Doesn’t Block the View

I’m really getting excited about the innovations going on in green technologies. This article on see-through solar panels is pretty interesting to me. Combined with solar roadways, we are really on the brink of a revolution in our energy generation capabilities.

From the article, “It is called a transparent luminescent solar concentrator and can be used on buildings, cell phones and any other device that has a clear surface.”

For more info, check out the article:

Michigan State University. "Solar energy that doesn't block the view." ScienceDaily. ScienceDaily, 19 August 2014. <www.sciencedaily.com/releases/2014/08/140819200219.htm>

Monday, August 18, 2014

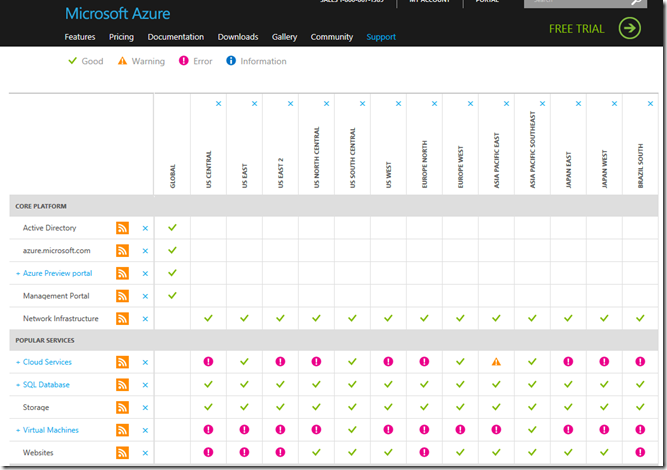

Microsoft Azure Stumbles - Again

I recently wrote about being “In Search of a Highly Available Persistence Solution”. However, it seems that Azure has been experiencing other more sweeping outages and service degradations. PCWorld recently wrote about Microsoft’s woes, “Azure cloud services have a rough week”.

Today, we are nervously watching as the service issues continue. Luckily the outages have not affected our product … yet.

Microsoft is trying very hard to gain ground against rival Amazon. This certainly doesn’t help. To be fair, cloud outages are not uncommon and when they do occur, a lot of people get pissed off. Last year’s InfoWorld article shows that it happens to the best of them.

Monday, August 11, 2014

Microsoft Released Visual Studio Update 3 – Aug 4, 2014

Microsoft released Update 3 for Visual Studio 2013 last week. Below is a list of what’s new:

What's new in Visual Studio 2013 Update 3

- CodeLens

- Code Map

- Debugger

- Performance and Diagnostics

- IntelliTrace

- Windows Store Apps

- Visual Studio IDE

- Testing

- Bug Fixes & Known Issues

Click here for a link to the release notes.

In Search of a Highly Available Persistence Solution

Our cloud-based SaaS offerings are hosted in Azure. Like many applications, some of our apps began life in the era before cloud computing became mainstream. As a result, many design decisions had to be rethought. Some required rather extreme makeovers just to be able to run there – like removing CLR stored procedures (gah!), since SQL Azure didn’t support them. Others were more fundamental to multi-tenant apps, but required change nonetheless.

Along the way, we have made numerous changes that brought higher performance, stability, scalability and reliability to the products. Frankly, the Azure compute infrastructure for PaaS is excellent. Combined with Windows Azure Traffic Manager, our compute has performed admirably. Scaling is a snap. Deployment is beyond easy.

Then we hit the snag. Persistence. State sucks. Compute is easy because it can be treated statelessly; but the persistence layer is another story. If your database goes belly up, you’re dead.

Having an offline copy of your database could help, right? But then you think, “How recently did you make that copy?” Or, “What about data loss between backups? My user really wants to see that last transaction!” And once your original database is back online, you ask yourself, “How do you sync up the changes?” Wouldn’t it be great if that copy was a transactionally-consistent copy?

SQL Azure touts high availability in the datacenter through its replicas. Every database is actually implemented as a master and two replicas. Their stated goal is 99.9% availability. On paper, you might think that having two replicas of your data would be sufficient to give you high availability. Our experience is that it is not. They do not offer a cross-datacenter high availability option. In recent conversations with Microsoft, surprisingly they are pointing people towards SQL Server in IaaS for high availability. Their Tutorial: Database Mirroring for High Availability in Azure further supports this view. C’mon guys – you can do better than this! This is a cop out. I want a scalable, highly available, cloud-ready repository.

Edit: The new database SKUs for Azure SQL Database (basic, standard, and premium) maintain “at least three replicas”. Please refer to this overview of the new SKUs for more information. I want to point out that our current product deployments are using the GA SKUs (Web and Business) and not the new SKUs outlined in this overview.

Time for another design check. In reality, what your application must do to get closer to the magical five 9’s of availability is to move replication logic into the application. If you’re contemplating more than one form of persistence (we are) and if you want high availability (we do), then be prepared to roll your own. A number of architectural design sessions and a review of the options available in the Azure ecosystem make it clear that they don’t have a complete answer here. It’s not entirely surprising. They give you the primitive infrastructure and framework bits and you do the rest. In this case the platform affords you nothing, leaving you to do the rest, a.k.a. everything else…

Moreover, if you intend to be a persistence polyglot, your options are complicated. How many of you put everything in the database? Does that image really belong in there? How about those PDFs? How about your application configuration and set up information? Couldn’t blob storage do a much better job of saving that binary data? Couldn’t you use a document database to store that configuration data? Modern enterprise applications have a very diverse set of data and using a relational database for all of it means you’re fitting square pegs in round holes.

A number of design patterns can help you to abstract your application business logic from how you persist your data. You *are* using design patterns, aren’t you? A simple pattern to use is the Repository pattern. Done properly, it completely hides the complexities of how you persist your data from the rest of your application. That leaves you the option of doing synchronous replication to one or more replica copies of your data, which can help to ensure you have a transactionally-consistent copy. Or you may choose asynchronous replication for higher performance at the risk of some small amount of data loss. Both approaches require your application to detect failures and to switch from the master to the replica copy.
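To make the idea concrete, here is a minimal sketch of a repository that replicates synchronously and fails over on reads. It is in Python purely for brevity and is not our production code. Every name in it (`Store`, `InMemoryStore`, `ReplicatingRepository`, `StoreUnavailable`) is hypothetical, and the in-memory store stands in for a real database, blob container, or document store.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Protocol


class StoreUnavailable(Exception):
    """Hypothetical signal that a store cannot be reached."""


class Store(Protocol):
    def put(self, key: str, value: str) -> None: ...
    def get(self, key: str) -> Optional[str]: ...


@dataclass
class InMemoryStore:
    """Stand-in for a real persistence back end (assumption for this sketch)."""
    name: str
    online: bool = True
    rows: Dict[str, str] = field(default_factory=dict)

    def put(self, key: str, value: str) -> None:
        if not self.online:
            raise StoreUnavailable(self.name)
        self.rows[key] = value

    def get(self, key: str) -> Optional[str]:
        if not self.online:
            raise StoreUnavailable(self.name)
        return self.rows.get(key)


class ReplicatingRepository:
    """Hides replication and failover from the rest of the application."""

    def __init__(self, stores: List[Store]):
        if not stores:
            raise ValueError("at least one store is required")
        self._stores = stores  # first store is the master, the rest are replicas

    def save(self, key: str, value: str) -> None:
        # Synchronous replication: the call returns only after every copy
        # has accepted the write. A production version would also need to
        # undo or reconcile a write that reached some copies but not others.
        for store in self._stores:
            store.put(key, value)

    def load(self, key: str) -> Optional[str]:
        # Failure detection and failover: try the master first, then replicas.
        last_error: Optional[Exception] = None
        for store in self._stores:
            try:
                return store.get(key)
            except StoreUnavailable as exc:
                last_error = exc
        raise StoreUnavailable("all stores are down") from last_error
```

The calling code only ever sees `save` and `load`, so switching to asynchronous replication later (for example, by queueing the replica writes) would not touch the business logic.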

Other more complex design patterns can help you with this problem at the cost of added complexity. Simplicity is king in software design. Embarking on an implementation of CQRS may leave you wondering why you started in a career of software development. But it can also leave you with a superior solution to a complex problem. Moreover, by implementing this in your application, you can decouple yourself from a platform and its requirements for high availability. Like everything in software development – it’s a balance of choices: flexibility, simplicity, maintainability, speed to market, performance, etc.

Like some on my team are fond of saying, “If it were easy, everyone would play the game.”

Saturday, July 5, 2014

OneDrive and File Indexing Is Killing My Virtual Machine

I started noticing my VM lagging badly over the last couple of weeks. Especially at startup, I frequently see the Windows Search Indexing and OneDrive Sync Engine services eating up at least 30% of my CPU – sometimes more. And of course they’re banging on my disk as well. For now, I’m going to pause syncing, but I think there’s a more serious issue. Judging by other posts online, I’m not alone.

I’m hoping Microsoft will provide an update that will correct this as it is becoming more and more of an impediment.

Thursday, June 5, 2014

Visual Studio 2013 Project Errors

I started getting this error right after trying to add a new project to a Visual Studio 2013 solution:

A numeric comparison was attempted on "$(TargetPlatformVersion)" that evaluates to "" instead of a number, in condition "'$(TargetPlatformVersion)' > '8.0'"

A quick Google search for the error showed that others have experienced this problem because of ReSharper. Sure enough, I have ReSharper 8.2 installed, and doing a “Repair” of the install through Control Panel cleaned up the problem. It’s not exactly clear what the repair fixes, but whatever, it worked :)

Sunday, June 1, 2014

Solar Freakin’ Roadways!

I haven’t paid as much attention to green technologies as I should have. But the concept of the technology in this video is pretty darned cool. My son showed it to me and while the first part of the presentation is kind of sophomoric, the idea is pretty compelling. I’d be curious to know what the cost for new roads would be and how much it would cost to do upgrades and maintenance in comparison to what it costs to do asphalt. Of course, that’s not a fair comparison, but I know that the average politician or even average person is going to just do the simple comparison and miss the side benefits.

https://www.youtube.com/watch?feature=player_embedded&v=qlTA3rnpgzU

Log4Net, O’ Log4Net, wherefore art thou o’ Log4Net?

While working on a new set of services, we noticed that our logging was not occurring as expected. We had just wired in our core logging implementation that uses Log4Net. Worse, our services were starting up and then crashing – in the development environment only.

After a bit of debugging I found that the problem was that the log4net assembly could not be loaded. What?! There it sits as expected in the bin directory. But IISExpress said “no way, I can’t load it.” (I’m sure it said something like that) :-)

What was odd was that I was able to put in some debugging code and could manually load a type from the assembly, so something is seriously amiss here. Fusion Log Viewer confirms that something is not quite right with the loading of the assembly:

*** Assembly Binder Log Entry (6/1/2014 @ 9:03:05 AM) ***

The operation failed.

Bind result: hr = 0x80070002. The system cannot find the file specified.

Assembly manager loaded from: C:\Windows\Microsoft.NET\Framework\v4.0.30319\clr.dll

Running under executable C:\Program Files (x86)\IIS Express\iisexpress.exe

--- A detailed error log follows.

=== Pre-bind state information ===

LOG: DisplayName = log4net, Version=1.2.12.0, Culture=neutral, PublicKeyToken=669e0ddf0bb1aa2a

(Fully-specified)

LOG: Appbase = file:///C:/Program Files (x86)/IIS Express/

LOG: Initial PrivatePath = NULL

LOG: Dynamic Base = NULL

LOG: Cache Base = NULL

LOG: AppName = iisexpress.exe

Calling assembly : (Unknown).

===

LOG: This bind starts in default load context.

LOG: No application configuration file found.

LOG: Using host configuration file: D:\SkyDrive\IISExpress\config\aspnet.config

LOG: Using machine configuration file from C:\Windows\Microsoft.NET\Framework\v4.0.30319\config\machine.config.

LOG: Post-policy reference: log4net, Version=1.2.12.0, Culture=neutral, PublicKeyToken=669e0ddf0bb1aa2a

LOG: GAC Lookup was unsuccessful.

LOG: Attempting download of new URL file:///C:/Program Files (x86)/IIS Express/log4net.DLL.

LOG: Attempting download of new URL file:///C:/Program Files (x86)/IIS Express/log4net/log4net.DLL.

LOG: Attempting download of new URL file:///C:/Program Files (x86)/IIS Express/log4net.EXE.

LOG: Attempting download of new URL file:///C:/Program Files (x86)/IIS Express/log4net/log4net.EXE.

LOG: All probing URLs attempted and failed.

We do have a number of other places where background threads run off and do some initialization work, so perhaps the problem lies there? I switched to tracking when the managed exception was thrown and lo and behold, here it is… The plot thickens a bit from this point on. The call stack reveals that this is occurring in System.Web.dll.

System.Web.dll!System.Web.Hosting.ApplicationManager.CreateAppDomainWithHostingEnvironment(string appId, System.Web.Hosting.IApplicationHost appHost, System.Web.Hosting.HostingEnvironmentParameters hostingParameters)

Now why would the ApplicationManager be trying to use Log4Net types? Specifically, "Type is not resolved for member 'log4net.Util.PropertiesDictionary,log4net, Version=1.2.12.0, Culture=neutral, PublicKeyToken=669e0ddf0bb1aa2a'."

Alas, after much gnashing of teeth and tearing of cloth, I found this obscure thread (https://www.mail-archive.com/log4net-dev@logging.apache.org/msg04644.html) on mail archive that does explain things. Reading through the comments I found this nugget:

“Sounds like the webhost was creating multiple appdomains and log4net wasn't available in all of them.”

Finally, this quote from the thread seems to be the resolution, though I abhor putting things in the GAC – especially for nonsensical problems such as this.

“As a matter of fact I'm working with VS2012. I just tried this on a virtual and ran into this issue. Installing log4net.dll into to the GAC solves this issue for me.”

I have to admit, this kind of thing drives me nuts. To make matters worse, this has been a problem for a LONG time. C’mon Microsoft, I know you can fix this kind of thing…now get cracking!

/smh

Thursday, April 10, 2014

Evolving to be more productive

http://www.hanselman.com/blog/ScottHanselmansCompleteListOfProductivityTips.aspx

I plan on trying to evolve towards this. The willingness to drop the ball is a hard thing though. I feel strongly compelled to do more with what I have. Perhaps being willing to let go of the things that aren't that important will leave me with a higher-value day, day in and day out.

This blog is a great example. It's not that I don't find it important to blog - I do. But I've constantly found myself deprioritizing it to the point that it never happens.

I think this blog can be a tool to help me to gather my thoughts and focus my attentions on the technologies, designs, and general learning that I need to focus on in order to continue to improve and to evolve. Hopefully in the process of doing that, others may find some nugget of usefulness there too.

After Windows 8.1 Update USB3 Broken

It doesn't appear that I'm the only one that has experienced this. I did however find a pretty easy fix for it. Simply go into Device Manager, where you should see a "!" icon on the USB3 hub indicating a problem.

Uninstall the drivers for that hub and be sure to check the checkbox to delete the files from your computer. Then Scan for Hardware changes and it will automatically detect and reinstall the drivers for that from the Internet.

Drivers are healthy again and now I can access the drive.

Good Luck!

Monday, November 25, 2013

Upgrading to Visual Studio 2013

I've purchased my Resharper v8.x upgrade and I'm set to go.

Once the uninstall of VS 2012 completed, which surprisingly didn't prompt me for a reboot, I began the installation of VS 2013. With a full install, the installer projects using 12GB of space - not a small app by any stretch.

As the VS 2013 installer wrapped up, it let me know that Hyper-V was turned on and that it had added me to the Hyper-V Administrators group. It looks like this was necessary for Windows Phone Emulator support.

After a lengthy reboot due to updates being applied to Windows, Visual Studio was ready to run. One interesting thing to note is the new sign-in process, which looks to support syncing settings across development machines.

I was initially stymied by some errors while trying to sign in. A quick reboot and restart of Visual Studio and the issues were resolved. Once logged in, your settings are associated with your Microsoft account and will follow you from one development machine to another.

Saturday, November 23, 2013

Trying to Decide On What's My Next Game Console

With the holidays coming up, I am beginning to wonder if it isn't time for a new gaming console. I have both an Xbox 360 and a PS/3. The latter was purchased originally as a player for Blu Ray and secondarily as a gaming console. To be honest, the games I have for the PS/3 are very few. As a gaming platform it just never took off; at least not for me. The Xbox 360 on the other hand offered good games. So alas I ended up with two consoles. Not my preference but done out of necessity to get the best of both worlds.

So now I'm back to my quest for deciding between the latest/greatest platforms. Should I get the Xbox One or the new PlayStation? Not sure yet, but the fact that the Xbox will play Blu Ray tells me I can consolidate down to one console. Finally - Yes! I'll be doing some more reviewing and considering, but I know me - in the end I'll probably stick with Xbox because let's face it - I pretty much use every other piece of Microsoft technology out there so what's one more?

Edit:

Seems that Vudu.com is helping to make my decision. They are an Xbox One partner, so delivery of my cloud movies is now available via the Xbox. Nice!

Friday, November 22, 2013

Higher Education Adoption of the Cloud

While I agree with the general premise of the article regarding the rate of adoption of the cloud by higher ed, I would argue that the use of cloud platforms will not just be driven by LMS usage, but will extend much further across their enterprises. Student information systems, housing systems, CRM, etc. will also drive them into the cloud - particularly where commodity platforms are much more cost effectively operated there.

Our customers are tech-savvy folks, but they are also pragmatic. The single biggest impediment to adoption, in my opinion, isn't a lack of desire to move to the cloud, but the cloud's maturity. As the next generation of services is made available and stability and reliability go up, that's where you'll see adoption take off.

Bottom line: Until public cloud providers dramatically improve their product stability and make it a true value add you won't see the education sector move to it in large scale.

Thursday, November 21, 2013

Preparing for the Cloud

In this post I'm going to discuss three common pitfalls that you should be wary of when you are planning to put your first application into the cloud. I'll be focusing on Windows Azure, but the points I make are equally applicable to other public cloud providers.

If you're like many people that have not yet put a production application into the cloud, then you may have a lot of questions about what that means. Developing apps for the cloud often uses tools and languages that you're already familiar with. However, the approach that you take to designing your app should be significantly different.

The cloud is a highly distributed environment. The resources that you depend on will likely not reside on the same host, or even in the same rack or data center, as your app. Much of the infrastructure that supports a cloud provider's resources is highly redundant and configured for high availability. But that doesn't mean that blips in connectivity and availability don't occur. Sometimes those blips can extend long enough to become bleeps - usually from you at 2 a.m. when you get that support call.

In spite of the best efforts of cloud providers to provide five 9's of service to you, the reality is something much less. Windows Azure currently runs at a rate of around three 9's of availability. What does this mean to you as the consumer of those services? It means you need to think redundancy. For instance, if your app has a requirement for high availability, you should consider building out your deployments to be in more than one data center. Windows Azure provides tools to help with this. Whether you are using Platform as a Service or Infrastructure as a Service VMs, it is very straightforward to deploy and configure multiple instances of your application functionality in more than one data center. Using a service such as Windows Azure Traffic Manager will allow you to stand up a highly available load balancer in front of your web application or web services quickly and easily. Depending on your needs, you can configure WATM to run in a fail over or round robin mode. The latter allows you to get some benefit out of your backup deployments. Don't forget to factor into your budget the increased cost for additional hosted services that you stand up for redundancy.
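To put numbers on those 9's, here is a small back-of-the-envelope calculation in Python. The function names are mine, and the redundancy formula assumes that deployments fail independently of each other, which real data center outages do not always respect, so treat the combined figure as an upper bound rather than a promise.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def downtime_hours_per_year(availability: float) -> float:
    """Expected hours of downtime per year at a given availability (0..1)."""
    return (1.0 - availability) * HOURS_PER_YEAR


def combined_availability(availability: float, deployments: int) -> float:
    """Availability of N redundant deployments.

    Assumes failures are independent: the service is down only when
    every deployment is down at the same time.
    """
    return 1.0 - (1.0 - availability) ** deployments


if __name__ == "__main__":
    for label, value in [("three 9's", 0.999), ("five 9's", 0.99999)]:
        hours = downtime_hours_per_year(value)
        print(f"{label}: {hours:.2f} hours/year ({hours * 60:.1f} minutes)")
    two_sites = combined_availability(0.999, 2)
    print(f"two independent three-9's deployments: {two_sites:.6f}")
```

Three 9's works out to roughly 8.8 hours of downtime a year, while five 9's allows only a little over 5 minutes. That gap is the argument for a second data center, with the caveat that the load balancer in front of the deployments has its own availability to account for.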

Windows Azure and the services you have access to are multi-tenant. Throttling is a way of life in the cloud and is used to ensure that the environment is not overrun with load. IT administrators are very aware of the effects that a VM instance can have on its host when it is allowed to consume too many resources. It usually means resource starvation for other VMs on that host. Throttling limits are set by the cloud provider in their infrastructure in order to limit the effects of resource starvation. They are out of your control. The single most important thing to remember about throttling is that it is meant to protect the cloud provider's resources - not your app. The effects of throttling can be insignificant or they can be debilitating depending on the way your application is designed. In severe cases, resources that you depend on may be temporarily unavailable due to throttling. Just like service outages, throttling can make it appear to your app that a critical service is unavailable or experiencing transient errors. One way to mitigate this is to make the most efficient use of resources possible in this environment and to employ caching wherever possible to minimize the number of trips necessary to expensive resources such as the database.
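As a rough illustration of both mitigations, the sketch below retries a throttled call with exponential backoff and puts a simple cache in front of an expensive lookup. It is written in Python for brevity. `TransientError`, `call_with_retry`, and `CachedLookup` are names I made up for the example, not part of any Azure SDK, and a real app would also want cache expiry and a cap on total wait time.

```python
import random
import time
from typing import Callable, Dict, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for throttling or other short-lived failures."""


def call_with_retry(operation: Callable[[], T],
                    attempts: int = 4,
                    base_delay: float = 0.2,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Run operation, retrying transient failures with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return operation()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of retries: let the caller see the failure
            # Double the wait each time, with jitter so that many callers
            # do not all retry at the same instant.
            sleep(base_delay * (2 ** attempt) * random.uniform(0.5, 1.5))
    raise AssertionError("unreachable")


class CachedLookup:
    """Caches results so repeat requests never reach the expensive resource."""

    def __init__(self, loader: Callable[[str], str]):
        self._loader = loader
        self._cache: Dict[str, str] = {}

    def get(self, key: str) -> str:
        if key not in self._cache:
            self._cache[key] = call_with_retry(lambda: self._loader(key))
        return self._cache[key]
```

The `sleep` parameter exists only so the delay can be swapped out in tests. The important design point is that only errors known to be transient are retried; retrying everything just adds load to a service that is already throttling you.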

This post provides a high-level view of some of the pitfalls that application developers can run into when deploying their application into the cloud. Follow-on posts will tackle these in detail and look at specific ways to mitigate them.

Thursday, November 15, 2012

Resolved error “The following system error occurred: No mapping between account names and security IDs was done.”

After getting our new TFS 2012 server built out I found myself unable to grant permissions to the Tfs_Analysis database in SQL Server Analysis Services. When attempting to add a new user from the local Active Directory domain to the TfsWarehouseDataReader role I received the above error.

I found that the role membership contained a user that was unresolvable via Active Directory and showed up as a SID only (no domain\name). Removing that unresolved user allowed me to add new groups to the role from the local AD.

It would appear from this that the logic is revalidating all of the users and groups that are listed in the membership role list when adding/removing members.

Sunday, September 2, 2012

Installing Visual Studio 2012 on Windows 8

I’ve run into a few quirks installing Visual Studio 2012 on Windows 8. The first is that the ISO image doesn’t appear to use the NTFS file system and Windows 8 will not mount the image because of that. Fortunately, my Win8 install is a VM which allows me to mount the image on my host PC and run from there.

Once I ran it that way, the installer started and ran partway through; however, it ended prematurely with an error that it couldn’t find a required object. Looking through the error logs didn’t give me enough detail to resolve it. When I clicked the provided link to look for common solutions, I found others had the same issue (with screenshots to match).

Right now, the only reliable way many people have found to install is to use the Web installer. It works, but it’s slow. If I find an update that solves the .ISO install problem, I’ll post an update here.

Monday, August 13, 2012

Motorola S9-HD Headphone Continual Beeping - FIX

Recently my Motorola S9-HD Bluetooth headphones started doing something annoying. It started on a bike ride where I really wanted some music. Periodically they began a long continual series of beeps. If I pressed the power button the beeps would stop, but not for long. Un-pairing and re-pairing them didn't change anything.

What I found that did work is to completely drain the battery. Completely...meaning leave them on until they power off, then turn them back on until they turn off again. I then did a full charge on the battery. I found that I had to re-pair them with my phone - not sure if that's normal or not. Once I did that, they started working again properly.

So far so good and the problem has not returned.

** EDIT **

Not long after I posted this, the problem returned. As best I can determine, the headphone batteries weren’t holding a good charge. I ended up putting them in my recycling pile. Now I’m on a quest to find a suitable replacement. I’ve looked at the next model up from Motorola, the S10 – I might go with them.

Sunday, July 22, 2012

The Road Back

Friday, April 20, 2012

Preparing for Predictive Analytics - Data is the Key

The Basics

As I mentioned in my last post, I’ll be making a series of posts on some of the challenges that you will face when embarking on a predictive analytics project. In this post, I’m going to focus on what may be obvious to most, but has frequently proven to be a challenge for the customers we have worked with: having ready access to the required data.

Your organization’s data is the key to a successful predictive analytics project. Quality historical data is required in order to build models that will let you make predictions about the likelihood of some event or behavior. Not all data is relevant in all modeling scenarios, but generally the more information you have the better. The modeling exercise will weed out the noise from the signal. Some modeling techniques are better than others at dealing with the noise as well. Familiarity with the capabilities of your tool is very important in this context.

Cataloging Your Data

How many of you know all the different kinds of data used in your company? My experience has been that most organizations have a lot of data in silos that are not well documented and certainly not well integrated with other corporate data. This data can range from duplicate customer data, to sales data, to communication data such as email logs, marketing data, or other data needed to GSD (Get Stuff Done). Master data management projects can help your organization centralize, de-duplicate, and cleanse that data, but the reality is that these kinds of projects can take years to complete and are very complex. Minimally, it would be helpful to begin with a data cataloging project to at least get your arms around the data that your organization has. Start with the basics. Identify the types of data you have and who is responsible for maintaining it. Make note of where the data lives; i.e., what tool/platform the system was developed in. Besides laying the groundwork for a master data management project down the road, this will be extremely valuable in your predictive analytics projects because it will outline where you need to go to get the information you want to model.
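A catalog like this does not need special tooling to get started. As a minimal sketch in Python, the record below captures just the basics listed above (type of data, owner, and platform), and a small helper flags data types that live in more than one system. The field names and the sample entries are invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class CatalogEntry:
    system: str     # name of the system or silo holding the data
    data_type: str  # kind of data, e.g. "customer" or "sales"
    owner: str      # who is responsible for maintaining it
    platform: str   # tool/platform the system was developed in


def systems_by_type(catalog: List[CatalogEntry]) -> Dict[str, List[str]]:
    """Group system names by the type of data they hold."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for entry in catalog:
        grouped[entry.data_type].append(entry.system)
    return dict(grouped)


def duplicated_types(catalog: List[CatalogEntry]) -> Set[str]:
    """Data types stored in more than one system (de-duplication candidates)."""
    return {data_type
            for data_type, systems in systems_by_type(catalog).items()
            if len(set(systems)) > 1}


# Hypothetical sample catalog; none of these systems come from a real customer.
catalog = [
    CatalogEntry("CRM", "customer", "Sales Ops", "SQL Server"),
    CatalogEntry("Billing", "customer", "Finance", "Oracle"),
    CatalogEntry("Billing", "sales", "Finance", "Oracle"),
    CatalogEntry("Mail Gateway", "email log", "IT", "flat files"),
]

if __name__ == "__main__":
    print(duplicated_types(catalog))
```

Even a flat list like this answers the two questions that matter for a modeling project: where a given kind of data lives, and who to ask for it.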

Does It Matter How the Data is Persisted?

The answer to this is highly dependent upon the tool or platform you are using in order to create your models and then to subsequently score the data. If you are using a commercial tool, your choices are limited by what that tool needs. Generally, the best answer is to bring the data together into a consistent storage medium. Whether that’s a relational database, a data warehouse, flat files, or XML files, the important thing is that the data can be accessed and interrogated as a set. Efficient set-based operations are critical to the performance of your analytics solution. Many of the modeling activities will involve slicing, dicing, counting, aggregating, and transforming your data set in numerous ways. This can be a very slow process if your data is not stored in a way that supports those kinds of activities.
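To show what "set-based" means in practice, here is a small Python sketch using the standard library's `sqlite3` module and an in-memory database. The `sales` table and its rows are made up for the example. Both functions compute the same per-customer totals, but the second one lets the storage engine do the aggregation instead of pulling every row into application code.

```python
import sqlite3

# Hypothetical sample data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (customer TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("acme", 100.0), ("acme", 50.0), ("globex", 75.0)],
)


def totals_row_by_row(db: sqlite3.Connection) -> dict:
    """Fetch every row and aggregate in application code."""
    totals: dict = {}
    for customer, amount in db.execute("SELECT customer, amount FROM sales"):
        totals[customer] = totals.get(customer, 0.0) + amount
    return totals


def totals_set_based(db: sqlite3.Connection) -> dict:
    """Ask the database for the aggregate in a single set-based query."""
    rows = db.execute(
        "SELECT customer, SUM(amount) FROM sales GROUP BY customer"
    )
    return dict(rows)  # each row is a (customer, total) pair


if __name__ == "__main__":
    print(totals_row_by_row(conn))
    print(totals_set_based(conn))
```

With three rows the difference is invisible. With millions of rows, or with data scattered across flat files that have to be parsed first, the row-by-row approach is where the slowness described above tends to come from.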

Analytics Repositories

My recommendation is that whenever possible you should try to collect your data into a centralized analytics repository. With smaller data sets this is much more approachable and is a common way to do it. However, with very large scale enterprise data this can be expensive, time consuming, and impractical. The time to update the repository can make timely model analysis impossible especially if you are trying to model transactional data that is quickly being added or changed.

Wrapping Up

In my next post, I’ll be discussing two different approaches to designing analytics repositories that address these two scenarios. The first approach is to use the simpler relational repository to store the data set. The second approach is to use a virtualized metadata-driven repository, which can be extremely useful in the larger scale enterprise settings.